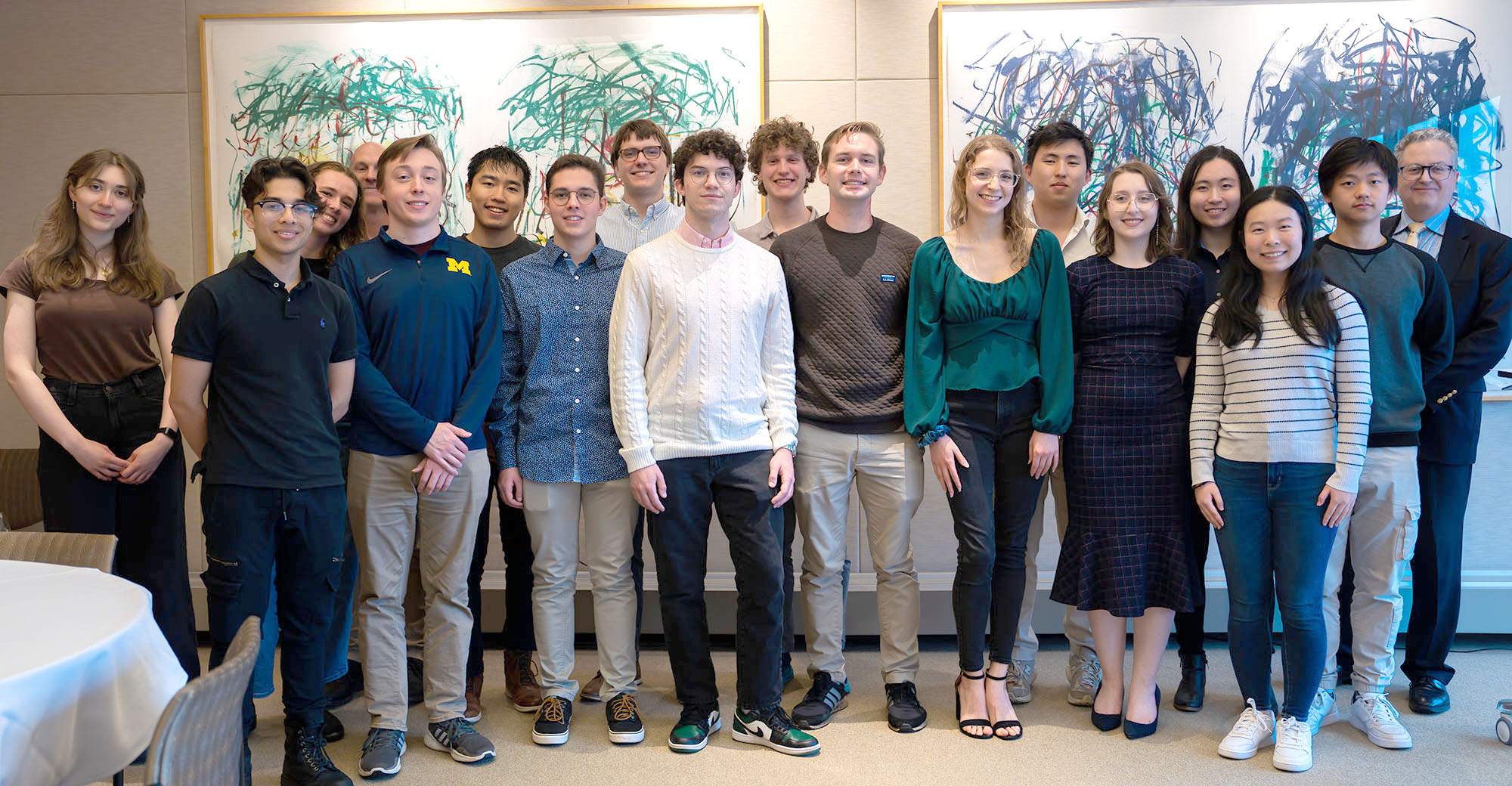

2024 Undergraduate Student Awards

The Department of Electrical Engineering and Computer Science congratulates the recipients of the 2024 EECS Undergraduate Student Awards and the College of Engineering awards. These awards recognize students who excel in scholarship, research, service, leadership, and entrepreneurship. Professors Dennis Sylvester, Interim Chair of ECE, and Michael Wellman, Richard H. Orenstein Division Chair of CSE, presented the awards to the students.

Where possible, we’ve shared memories from the students on their time at U-M and in EECS.

EECS Award Recipients

These students were selected to receive the following EECS Undergraduate Student Awards.

Outstanding Achievement Award

This award is presented by the EECS Department to an outstanding senior student from each of the three programs of study (EE, CE, and CS) in EECS. Students are selected on the basis of outstanding overall academic and personal excellence.

Alyssa Anderson

Electrical Engineering

“During my time at U-M, I specialized in neuromodulation therapies through a double major in Biomedical and Electrical Engineering. In this area, I’ve spent almost three years working with the Neuromodulation Lab, assisting in creating patient-specific spinal cord models to better understand the mechanisms behind pain and pain-alleviating therapies. Outside of academics, I’ve had the pleasure of volunteering through G.R.O.W. Tutoring, SWE, and WECE, and, on a more personal level, I spent a few years running the iconic Friday teas as the Martha Cook Service Chair, something I hope to continue during my graduate studies.”

Daniel Li

Computer Science

“Some of my favorite memories are chatting with friends and faculty during office hours. Working on graph algorithms with Prof. Thatchaphol Saranurak and Prof. Greg Bodwin has been a great learning experience. I am grateful for their dedication to teaching.”

Joseph Maffetone

Computer Engineering

“During my time at U-M, I’ve participated in several student project teams, namely Michigan Robotic Submarine and currently the Michigan Mars Rover team. I’ve enjoyed being an IA for EECS 373, and in general have developed a strong interest in embedded systems through classes and extracurriculars. I’ve completed internships at Grainger and the IoT startup Frost Solutions, the latter of which I now work at part time as a software engineer. I’m very proud of my contributions to the team as the company continues to grow, and my overall growth as an engineer over the past few years.”

Outstanding Research Award

This award is given to students who completed an outstanding research project with a faculty member or graduate student over and above the requirements of a course or an independent project.

Marwa Houalla

Computer Science

“I am a Master’s candidate in Computer Science and Engineering. Prior to this, I served as an instructor for EECS 281 and currently serve as an instructor for EECS 497. My research is driven by a commitment to understanding and improving the ways in which technology affects our lives and society at large. Last year, I completed an honors thesis under the guidance of Professors Fabiana Silva, Hector Garcia-Ramirez, and reader Dr. David Paoletti. My work involved applying natural language processing techniques and web crawling to extract and classify the content of job recommendations from LinkedIn. I am eager to continue this trajectory of research and application.”

José Luiz Vargas de Mendonça

Computer Engineering

“During my time at U-M, I was involved in the Avionics team at the Michigan Aeronautical Science Association and participated in the Michigan Taekwondo Club and the Japan Student Association. My favorite classes here were EECS 483, where I learned how to build a compiler using Rust, and AERO 623, where I worked in groups implementing different numerical solutions to fluid dynamics problems. I have also been involved in programming languages research at the MARVL Lab and quantum computing research in the CAFQA Lab, which motivated me to apply to graduate school.”

Jeremy Shen

Electrical Engineering

“I am an electrical engineering student in Professor Hovden’s group. At Hovden lab, I study 2-dimensional quantum materials with charge density waves and how their associated structural distortions manifest on transmission electron microscopes. I also perform electrical characterization of transition metal dichalcogenides. The result of my ex-situ transport experiments on TaS2 is published in Nature Communications. I also currently serve as the Executive Vice President of the Triangle Engineering Fraternity.”

Outstanding Service Award

This award is presented to students who have shown exceptional leadership in their student organizations, service to the University, College, or Department, or service to the community.

Elham Islam

Computer Engineering

“Some of my favourite experiences and proudest accomplishments have come from the work I’ve done on our school’s rocketry team, MASA. I have worked on the avionics subteam for the past three years, and nothing has been more gratifying than finally seeing our rocket, Clementine, launch this past summer. This project and my internships at MIT Lincoln Laboratory and NASA JPL have allowed me to develop my skills as an engineer tenfold and opened up many more doors for me to explore. But most of all, being surrounded by incredibly driven and curious people is the part of UMich I am most grateful for, and I hope to find a similar community wherever I go.”

Adviti Mishra

Computer Science

Amanda Whitley

Electrical Engineering

Amanda Whitley’s favorite class was EECS 461, Embedded Control Systems. During her time at U-M, she has been heavily involved in the Society of Women Engineers (SWE). She helped host a conference for women leaders and contributed to countless STEM outreach events for K-12 and college-aged students. Amanda is also the treasurer of a recreational dance organization, New Movement Dance Company, where she has taught three semester-long classes and taken many more.

Commercialization/Entrepreneurship Award

This award is presented to a student who exemplifies a partnership between engineering and business through involvement in or startup of a private business, patents, or partnerships with corporations, furthering their field of knowledge or interest.

Miranda Baltaxe

Computer Engineering

“I am a Computer Engineering major originally from Arlington, VA. When I’m not on North Campus, I can be found at NewHaptics, a UMich spinoff company dedicated to increasing access to digital information for people who are blind, or off campus hanging out with teammates on Flywheel, Michigan’s Women’s Ultimate Frisbee team. Some highlights of my college experience include working with a super talented team to create a virtual study tool for blind students in EECS 473 and placing in the top three at the Centex tournament twice with Flywheel.”

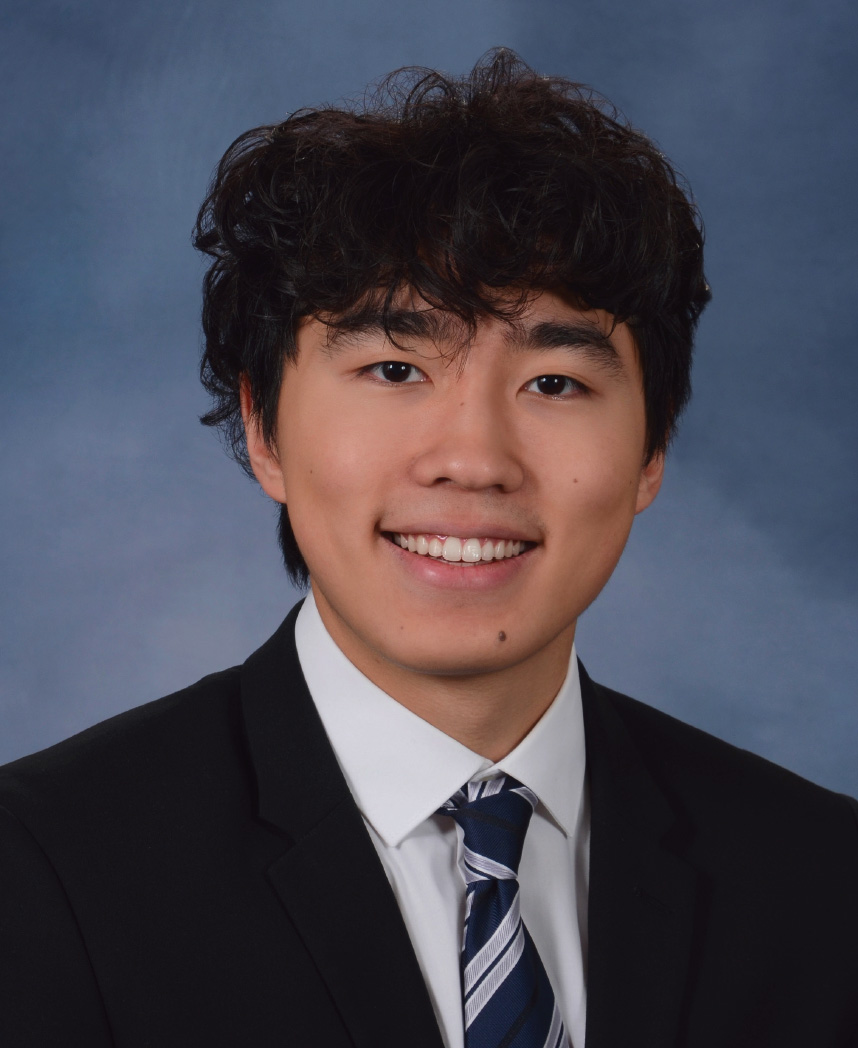

Kevin Ji

Computer Science

“At Michigan, I was on the Executive Board of the Michigan Mars Rover Team, a project team here on campus, and of Capital Consulting Group, a pro-bono consulting club. Michigan Engineering taught me the analytical and problem-solving skills needed to tackle challenges. Through my experiences both on and off campus, I realized that I could combine my technical skills and business acumen to deliver the biggest impact. As such, I have taken roles that combine both areas, most recently conducting a strategy and transformation project for a large Fortune 500 company focused on improving patient experiences. One of the great things about being at Michigan is being around so many great people with diverse backgrounds and skills. I love meeting and learning from others, and doing so has really influenced how I want to leave a positive impact on the world.”

Kevin Zheng

Electrical Engineering

William L. Everitt Student Award of Excellence

This award is presented by the EECS Department to a top student from each of the three programs of study (EE, CE, and CS). These students are in the top 10% of their class and have an interest in communications and computers, as well as professional interests and activities.

Amy Liu

Electrical Engineering

“At U-M, I’ve had the opportunity to engage in a variety of experiences both in and out of the classroom. As a part of the Michigan Mars Rover team and through internships in RF communications, I’ve learned a lot about real-world applications of electrical engineering. I’ve also been involved in teaching and in science outreach, which are endeavors I’d love to continue in the future.”

Andrea Liu

Computer Engineering

“I’m majoring in computer engineering and minoring in music. Outside of class, I worked as an IA for EECS 270 and EECS 370, and I play the violin in the Michigan Pops Orchestra and Campus Symphony Orchestra. My experiences at U-M have opened up other opportunities for me, such as my internships at Los Alamos National Lab and Roblox.”

Zachary Weiss

Computer Science

Zachary is majoring in Computer Science with a minor in Entrepreneurship. Throughout his time at Michigan, he was an instructional aide for EECS 485 and EECS 281 and was involved with various entrepreneurial organizations on campus. Zach specialized in backend distributed computing and hopes to work in that field full-time.

William Harvey Seeley Prize

This award is presented to an electrical engineering student who stands first in his/her class at the completion of the freshman year.

Anyun Hsu

Electrical Engineering

“During my time at U-M, I served as a learning assistant in the physics help room. Collaborating with my fellow students to better understand course materials turned out to be one of the most rewarding experiences of my college years. Additionally, I thoroughly enjoy my involvement in Konnect, the co-ed K-pop dance group on campus, and actively participate in the Michigan Wolves Chinese Basketball Women’s Team.”

Jeremy Shen

Electrical Engineering

“I am an electrical engineering student in Professor Hovden’s group. At Hovden lab, I study 2-dimensional quantum materials with charge density waves and how their associated structural distortions manifest on transmission electron microscopes. I also perform electrical characterization of transition metal dichalcogenides. The result of my ex-situ transport experiments on TaS2 is published in Nature Communications. I also currently serve as the Executive Vice President of the Triangle Engineering Fraternity.”

Collaboration, Respect, and Inclusion Award

This award is given to students who have demonstrated outstanding commitment and made significant contributions to advancing diversity, equity, and inclusion, with clear impacts as a result of these efforts.

Brinda Kapani

Electrical Engineering

“I’ve been a part of the U-M Autonomous Robotic Vehicle project team since I started at Michigan, and it cemented my love for electrical engineering! I also enjoy volunteering with kids and spreading STEM at SWE events, BookPals tutoring, and Elementary Engineering Partnerships sessions. I’ve been able to give back to the U-M community through Engineering Student Government and as an IA for my favorite class, Robotics 102. I love finding new study spots on North Campus, and my current favorites are (anywhere) in the Ford Robotics Building and in the new EECS undergraduate lounge!”

Dora Kuflu

Computer Engineering

“I am a second-year Computer Engineering major, and I am an active member of SPARK, Snowboarding Club, and Michigan International Student Society. My favorite classes so far are EECS 370 and 270, and I will hopefully be pursuing an internship over the summer.”

Reese Liebman

Computer Science

“I am a junior majoring in Computer Science, but I’m currently pursuing the semester-long IPE Tech Career Accelerator in Prague to supplement my pursuit of the International Minor for Engineers! Additionally, I am a member of the Engineering Global Leadership (EGL) program within Engineering Honors, and actively engage in local and global outreach through the Society of Women Engineers (SWE). I have prior internship experience in full stack development and applied ML, and am particularly passionate about CS theory and its applications in security. Last semester, I was a CSE Peer Advisor and an IA for EECS 376, both of which were incredibly fulfilling roles that I hope to pursue when I return to Ann Arbor!”

Community Impact Award

This award is given to students who enhance departmental excellence by fostering a supportive climate, promoting collaborative work and learning, and investing time and energy to create a sense of belonging for all members of our community.

Elijah Becker

Computer Engineering

“I have enjoyed my time studying Computer Engineering at U-M. My favorite courses include EECS 373, 473, and 482, all of which are essential for anyone going into embedded software development. My main extracurricular involvement has been with Cru, a campus ministry here at U-M, where I have met my best friends and found an amazing community. This has definitely been one of the most important parts of my time at college.”

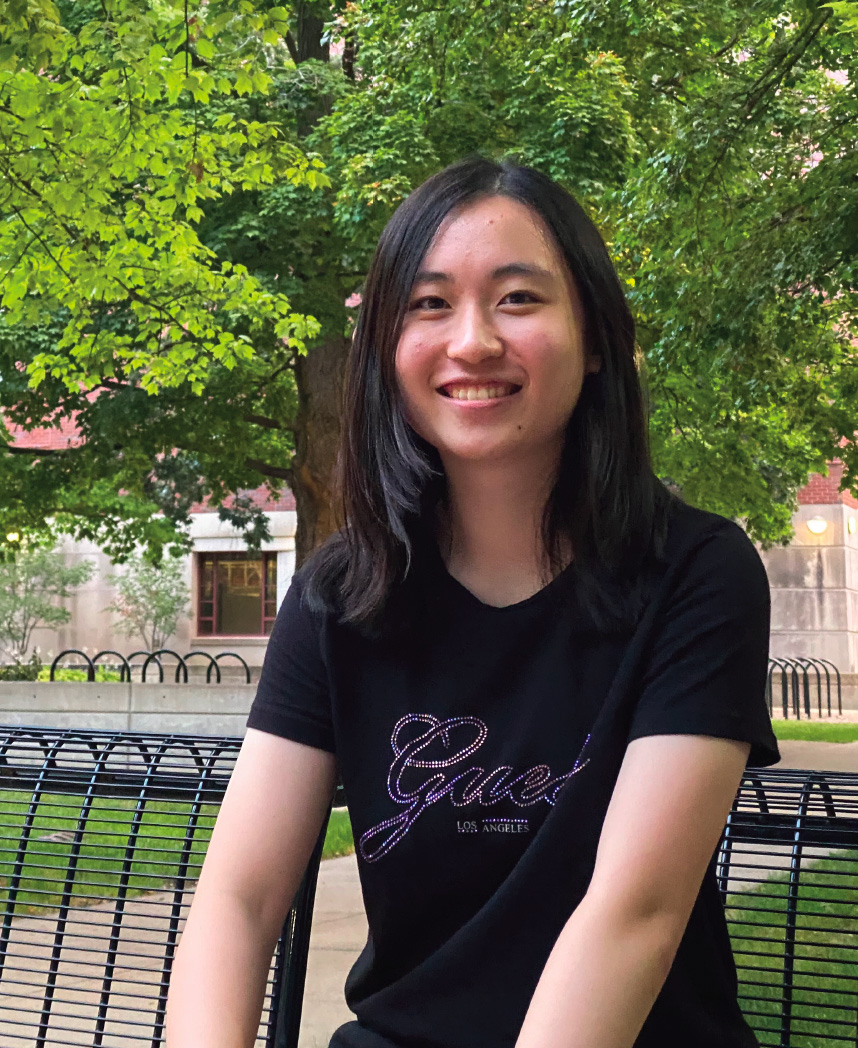

Janet Jiang

Computer Science

Samuel Nolan

Electrical Engineering

Samuel Nolan is a member of the prestigious engineering honor societies Tau Beta Pi (TBP) and Eta Kappa Nu (HKN). They have worked extensively with BlueStamp Engineering to introduce middle and high school students to robotics and currently serve as an Instructional Aide for EECS 311, demonstrating their commitment to both academic excellence and community engagement.

College of Engineering Award Recipients

These students were selected to receive the following College of Engineering Awards.

Distinguished Academic Achievement Award

This award is presented to an outstanding student from each program offered within the College of Engineering. Students are selected on the basis of academic and personal excellence.

Andrea Liu

Computer Engineering

“I’m majoring in computer engineering and minoring in music. Outside of class, I worked as an IA for EECS 270 and EECS 370, and I play the violin in the Michigan Pops Orchestra and Campus Symphony Orchestra. My experiences at U-M have opened up other opportunities for me, such as my internships at Los Alamos National Lab and Roblox.”

Ibrahim Musaddequr Rahman

Computer Science

Ibrahim currently teaches EECS 388, does applied cryptography research, and works as an RA in the dorms. He is an External Vice President of the engineering honor society Tau Beta Pi, serving as an organizer for the Leaders & Honors Awards Brunch and the Fall Engineering Career Fair. Last year, Ibrahim presented a swarm robotics paper, titled “Imitating Swarm Behavior by Learning Agent Level Controllers,” at the American Control Conference (ACC) in San Diego.

Samuel Nolan

Electrical Engineering

Samuel Nolan is a member of the prestigious engineering honor societies Tau Beta Pi (TBP) and Eta Kappa Nu (HKN). They have worked extensively with BlueStamp Engineering to introduce middle and high school students to robotics and currently serve as an Instructional Aide for EECS 311, demonstrating their commitment to both academic excellence and community engagement.

Distinguished Leadership Award

This award is presented to undergraduate or graduate students who have demonstrated outstanding leadership and service to the College, University, and community.

Blake Mischley

Computer Science

“During my time at the University of Michigan, I was deeply involved in various college clubs and organizations that fostered my passion for entrepreneurship and investment. I was an active member of the Michigan Investment Group, where I honed my skills in financial analysis and portfolio management. Additionally, I was part of StartUM, a community that provided resources and support for budding entrepreneurs, and participated in Entrepreneur Power Hour events, gaining valuable insights and networking opportunities. One of my proudest accomplishments came at the end of my freshman year when I co-founded MeetYourClass (www.meetyourclass.com). This venture has flourished into a platform with over 275,000 student accounts, revolutionizing the way students connect and collaborate. With the backing of Techstars, we have expanded our reach, made full-time hires, and established a growing list of partnerships with universities nationwide.”

Amy Wei

Computer Science

“I am an Instructional Assistant for the course Discrete Mathematics (EECS 203), where I teach, hold office hours, and work directly with students! I am also part of the EECS honor society Eta Kappa Nu (HKN), and the Society of Women Engineers (SWE). Outside of STEM, I play violin in the Campus Symphony Orchestra. And during a summer research program at Carnegie Mellon, I built NaNofuzz, a software testing tool intended to help programmers test their code, which is centered around accessibility and supporting the user’s needs.”

Tau Beta Pi First Year Student Award

This award recognizes exemplary character and academic achievement among new students.

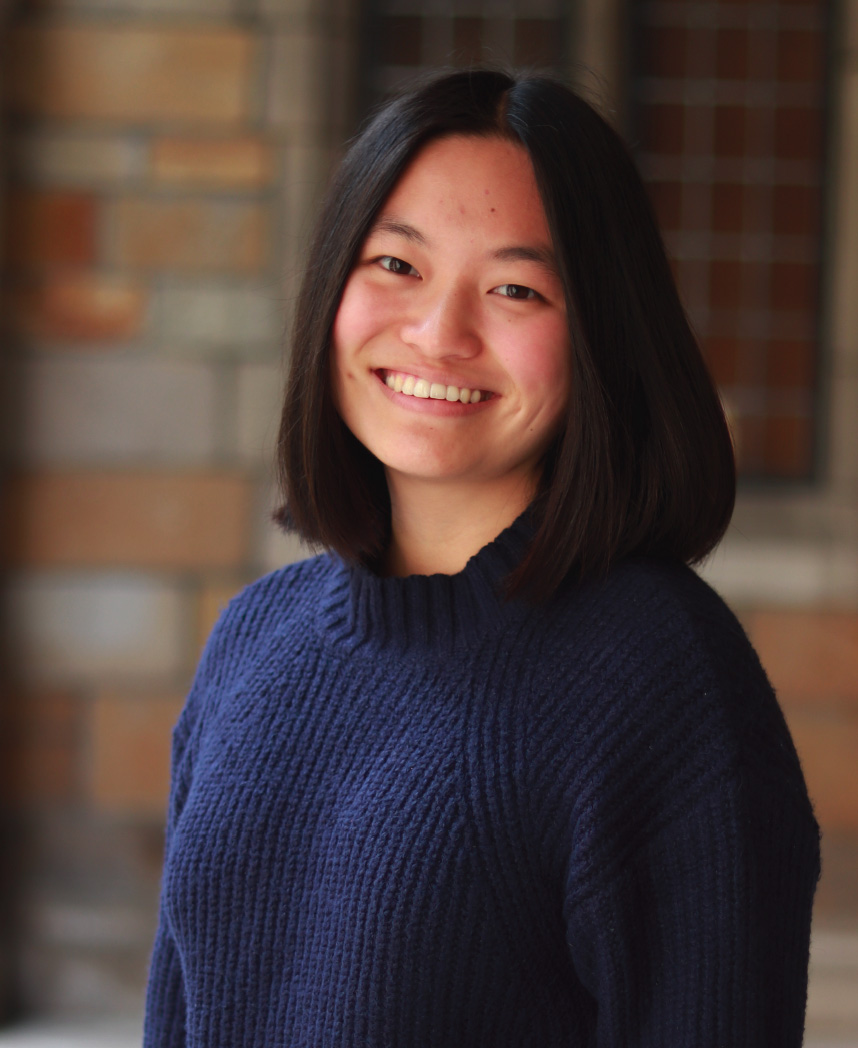

Ava Chang

Computer Engineering

Tau Beta Pi Tom S. Rice Award

This award is presented to a student who best combines exemplary character and distinguished scholarship.

Hunter Muench

Computer Science